To celebrate its 2021 partnership with the Mercedes-AMG F1 team, AMD worked with The Pixelary to create an incredible 3D animation of the championship-winning car, with help from Blender and some AMD Radeon Pro graphics cards.

In 2021, Formula One fans witnessed one of the most exciting seasons of racing in history, going right to the wire in the final race. Sponsored by AMD, the championship-winning car, the Mercedes-AMG F1 W12 E Performance, is a piece of highly tuned engineering. To mark the occasion, AMD enlisted The Pixelary to create a stunning visual.

Instead of using its Radeon ProRender rendering engine plug-in with Blender, as it did in 2020, this time the animation was created in Blender 3.0, showcasing the high-speed performance of two AMD Radeon Pro W6800 graphics cards with Blender 3.0’s updated Cycles renderer.

“Cycles X gave users a big performance uplift,” says The Pixelary head Mike Pan. “At the same time, the old OpenCL back end in Blender 2.9x has been replaced by the AMD HIP API [Heterogeneous-computing Interface for Portability]. In a nutshell, HIP allows Cycles to be developed using a single unified code path for AMD and Nvidia GPUs, and CPUs.”

Pan explains that, for designers, this means better feature parity between CPU and GPUs from different vendors. Most importantly, AMD HIP has brought even faster rendering speeds for supported AMD Radeon graphics cards, currently validated on W6800 and RX 6000 series desktop GPUs and enabled on other AMD RDNA and RDNA 2 architecture graphics cards.
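As a rough illustration of what selecting the HIP back end looks like from Blender's Python scripting side, here is a minimal sketch. The helper name `enable_hip_rendering` is hypothetical and is not taken from The Pixelary's project; it assumes it is handed the Cycles add-on preferences and a scene from a Blender 3.0 or later session with a supported AMD GPU.

```python
def enable_hip_rendering(cycles_prefs, scene):
    """Select the HIP back end and switch the scene to GPU rendering.

    cycles_prefs: the Cycles add-on preferences object (assumed to come
                  from bpy.context.preferences.addons["cycles"].preferences)
    scene:        a Blender scene (for example bpy.context.scene)
    Returns the names of the devices that were enabled.
    """
    cycles_prefs.compute_device_type = "HIP"
    cycles_prefs.get_devices()  # refresh the device list for this back end

    enabled = []
    for device in cycles_prefs.devices:
        # Enable only HIP GPUs; the CPU entry in the list is switched off.
        device.use = device.type == "HIP"
        if device.use:
            enabled.append(device.name)

    scene.cycles.device = "GPU"
    return enabled


# Inside Blender this could be called as follows (illustrative only):
#   import bpy
#   prefs = bpy.context.preferences.addons["cycles"].preferences
#   print(enable_hip_rendering(prefs, bpy.context.scene))
```

With two supported cards installed, both would appear in the returned list, and Cycles would then spread the render work across them.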

Having previously created 60 wallpapers of the previous season’s car using Radeon ProRender, The Pixelary had a lot of ideas for how it wanted to bring the car to life in an animation.

Cycles adaptation

The first step was adapting the car asset originally made for Radeon ProRender to Cycles. “Luckily, our Blender Radeon ProRender project already uses Blender’s native shader network, so the materials just work in Cycles. The only change was some ray visibility flags that had to be set for the details on the car,” Pan explains.

This ensured that additional surface details on the bodywork, such as panel gaps and screws, didn’t cast unwanted shadows or reflections that would make them look like they were floating. Adding details this way is a lot more flexible, says Pan, and doesn’t require the car body to be split up into as many pieces.
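The article does not say exactly which flags the team changed, so the following is only a sketch of the general idea, assuming the shadow and glossy visibility flags were the relevant ones. In Blender 3.0 these are per-object properties; the helper name `make_detail_overlay` and the collection name in the usage comment are hypothetical.

```python
def make_detail_overlay(obj):
    """Keep a detail object visible to the camera but hide it from
    shadow and glossy rays (assumed choice of flags, not confirmed
    by the article)."""
    obj.visible_shadow = False  # the object no longer casts shadows
    obj.visible_glossy = False  # the object no longer shows in reflections
    return obj


# Inside Blender this could be applied to a whole collection of details
# ("Details" is a made-up collection name):
#   import bpy
#   for obj in bpy.data.collections["Details"].objects:
#       make_detail_overlay(obj)
```

Because the flags live on the detail objects rather than on the body mesh, details can be added or moved without touching the underlying bodywork.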

With the assets ready, it was time to get creative in achieving the desired results. “We wanted to come up with shots that look great and use as many available features as Cycles has to offer. Volumetrics, large number of lights, and motion blur were the top three picks, as these have been traditionally challenging features for many render engines.”

Since the car is predominantly black and very shiny, most of its shape comes from specular reflections.

“Blender 3.0’s much-improved viewport rendering performance, combined with the speed of GPU rendering, made experimenting with lighting a joy,” says Pan, adding that the process was further sped up by seeing in real time how different lights fall on the car.

“We did the initial pass in Blender’s OpenGL Eevee renderer, then switched over to Cycles once we achieved a look that we liked and continued the refinement process.”

For the paddock shot, Pan says Eevee’s output is astonishingly similar to the Cycles render. “For other shots like the track, the lack of real ray tracing in Eevee is evident, but it still gave us a good approximation to set up the shot.”

Final renders

The final renders were done at 4K with high sample counts to achieve the highest quality possible. Denoising was added to further smooth out the image. “Using AMD HIP, there isn’t even the usual initial delay while the render kernel compiles, because the HIP kernel comes with Blender. This means rendering starts immediately as soon as we hit F12,” says Pan.

Pan says that render time was almost cut in half simply by switching from Blender 2.93 to Blender 3.0. Using two GPUs at the same time halved the render time again.

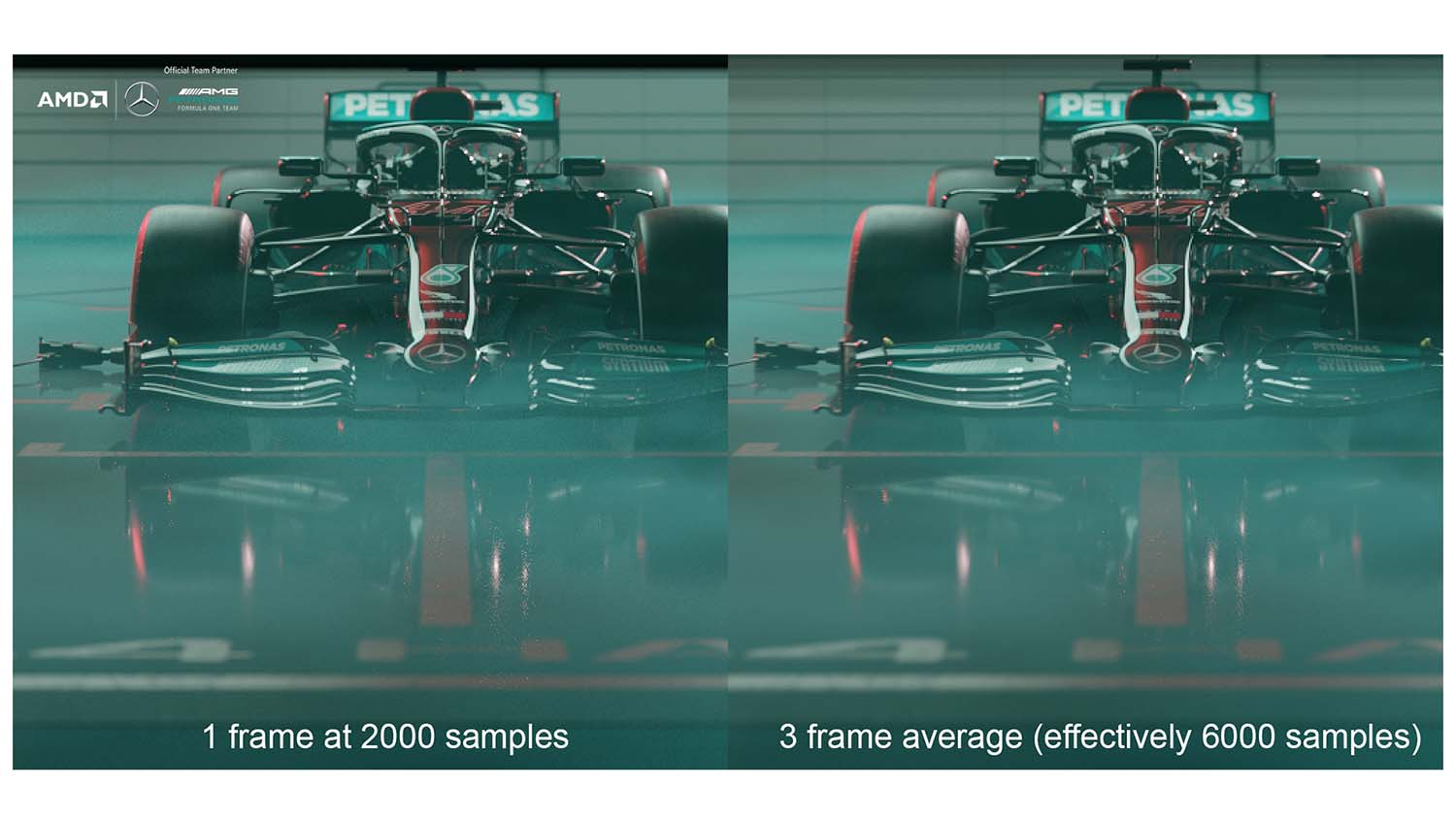
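To see how the two speed-ups described above compound, here is a small worked calculation. The 2x figures are assumptions that round "almost cut in half" up to an exact halving; they are not measured values from the project.

```python
def relative_render_time(*speedups):
    """Return the render time relative to the baseline (1.0) after
    applying each speed-up factor in turn."""
    time = 1.0
    for factor in speedups:
        time /= factor
    return time


version_switch = 2.0  # assumption: Blender 2.93 -> 3.0 roughly halves the time
second_gpu = 2.0      # assumption: a second GPU halves it again

print(relative_render_time(version_switch, second_gpu))  # 0.25
```

Under these rounded assumptions, a frame would take about a quarter of the time it took in Blender 2.93 on a single GPU.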

“Not everyone will have access to top-of-the-line GPUs, so we investigated how we could have further improved our rendering time by using temporal denoising. This was not used for the final animation in the video,” says Pan.

“The basic idea behind temporal denoising is to render each frame at a lower sample count, and then merge multiple frames to reduce the noise. This approach works extremely well for low-motion shots, since the frame-to-frame difference is small.

“Despite this, simply blending the frames together will still result in ghosting, so we used motion vector data to merge the frames in a more intelligent way using a well-known trick.”

As a result, by mixing three frames together and rendering each frame at one-third of the sample count, Pan explains, the team was able to cut the render time to roughly a third with virtually no artefacts.
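The article does not name the trick that was used, so the sketch below shows only a generic form of motion-compensated frame averaging, not The Pixelary's actual method. It assumes greyscale frames stored as nested lists and whole-pixel motion vectors; the function names and the vector convention (where to look in the neighbouring frame) are illustrative choices.

```python
def warp(frame, vectors):
    """Align a neighbouring frame to the current one.

    vectors[y][x] is (dx, dy): where the content of current pixel (x, y)
    sits in the neighbouring frame (assumed convention).
    """
    height, width = len(frame), len(frame[0])
    aligned = []
    for y in range(height):
        row = []
        for x in range(width):
            dx, dy = vectors[y][x]
            sx = min(max(x + dx, 0), width - 1)   # clamp at the borders
            sy = min(max(y + dy, 0), height - 1)
            row.append(frame[sy][sx])
        aligned.append(row)
    return aligned


def temporal_merge(prev, cur, nxt, to_prev, to_next):
    """Average three frames after aligning the neighbours to the current one."""
    prev_aligned = warp(prev, to_prev)
    next_aligned = warp(nxt, to_next)
    return [
        [(p + c + n) / 3.0 for p, c, n in zip(p_row, c_row, n_row)]
        for p_row, c_row, n_row in zip(prev_aligned, cur, next_aligned)
    ]


# A bright pixel moving one pixel to the right per frame.
prev = [[0, 9, 0, 0, 0]]
cur = [[0, 0, 9, 0, 0]]
nxt = [[0, 0, 0, 9, 0]]
still = [[(0, 0)] * 5]      # no motion compensation
to_prev = [[(-1, 0)] * 5]   # content was one pixel to the left
to_next = [[(1, 0)] * 5]    # content will be one pixel to the right

print(temporal_merge(prev, cur, nxt, still, still))      # [[0.0, 3.0, 3.0, 3.0, 0.0]]
print(temporal_merge(prev, cur, nxt, to_prev, to_next))  # [[0.0, 0.0, 9.0, 0.0, 0.0]]
```

The first result shows the ghosting that plain blending produces, with the pixel smeared across three positions. The second keeps it sharp because the neighbouring frames are aligned before averaging. A production version would also need sub-pixel vectors and handling for occluded areas.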

The final video was put together using Blender’s Sequencer, adding to the amazing legacy of the car that will accompany memories of that dramatic season.