Researching the design tools and technologies of tomorrow and beyond, we look at the transdisciplinary work of Bristol University’s Design and Manufacturing Futures Lab

The projects being worked on at the Design and Manufacturing Futures Lab at the University of Bristol are an intriguing blend of far-term futuristic and present-day pragmatic.

Set up in 2015, the lab falls under the umbrella of Engineering Systems, Design and Innovation (ESDI), a department researching and applying cutting-edge science with design thinking and systems thinking. Findings are then married up with industry links to enable them to transfer into real-world use cases.

“What differentiates us as a research group is that you can’t design it if you don’t know how you’re going to make it,” says Professor Ben Hicks, the lab’s founder and head of ESDI. “There are a lot of design groups and a lot of manufacturing groups, but we are one of the few design AND manufacturing groups.”

The pipeline of projects is varied, explains Hicks. Groups are looking at technologies like quantum computer-aided engineering, and reimagining how CFD, FEA and generative design tools might operate using quantum capabilities. That’s a long-term project, he says, but the team is also looking to the shorter term and at today’s needs, too.

“We’re really looking at that physical-digital [interface] and trying to look at, I wouldn’t say democratising, but providing tools, technologies and methods that are affordable and deliverable with a cost/benefit trade-off,” he says.

Within the lab, there are around seven lecturing staff and professors, and a mix of 14 to 15 PhDs, doctoral students and PDRSs (post-doctoral researchers) at any one time, as well as a couple of industry fellows. It’s a very transdisciplinary set-up, with a team that includes software engineers, circuitry designers and business school graduates.

Physical-digital prototyping is a priority for the group, which has plans to extend how designers can interact with and manipulate prototypes, synchronising and reconfiguring them, and using technologies like lightweight real-time analytics to assess their performance.

With product development taking careful first steps with new immersive technologies, teams like the Design and Manufacturing Futures Lab are finding ways to make future technologies a part of today’s workflows, and creating solutions for some of the gaps they leave. To give readers a flavour of some of this work, here are details of three projects.

1. Remanufacture for generative design

This project coupled the capability to remanufacture with that of generative design. The idea here was to enable users to computationally explore and ‘upgrade’ existing products. The goal: to prolong an existing product’s lifespan and offer improved performance, comfort or more general personalisation.

The project took an old bicycle crank arm to show the concept in practice. The original part was 3D scanned, and key features were extracted and preserved. Finally, the part was optimised with generative design. By constraining the generative solutions to work within the existing geometry, under a new set of design requirements like forces, safety and so on, the ‘old’ product can then form the billet for remanufacture.
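The constraint described above can be illustrated with a minimal sketch. It assumes, purely for illustration, that the scanned part, the preserved features and a candidate design are each represented as sets of voxel coordinates. The function names and the voxel representation are hypothetical and are not taken from the lab's own tooling.

```python
# Illustrative sketch only: voxel sets stand in for real scan and CAD data.

def is_valid_remanufacture(candidate, billet, preserved):
    """A candidate is valid if it keeps every preserved voxel and
    never needs material outside the scanned part (the billet)."""
    return preserved <= candidate <= billet


def material_to_remove(candidate, billet):
    """Voxels that would be machined away from the old part."""
    return billet - candidate


# Hypothetical 6 x 2 slab standing in for the scanned crank arm.
billet = {(x, y, 0) for x in range(6) for y in range(2)}
# Hypothetical preserved features, e.g. the two mounting holes.
preserved = {(0, 0, 0), (5, 0, 0)}

# One candidate keeps only the bottom row of the slab.
candidate = {(x, 0, 0) for x in range(6)}
# Another candidate asks for material the old part does not have.
oversized = candidate | {(6, 0, 0)}

print(is_valid_remanufacture(candidate, billet, preserved))
print(is_valid_remanufacture(oversized, billet, preserved))
print(len(material_to_remove(candidate, billet)))
```

A generative design loop would apply a check like this to every candidate, so that only shapes that can be cut from the old part survive.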

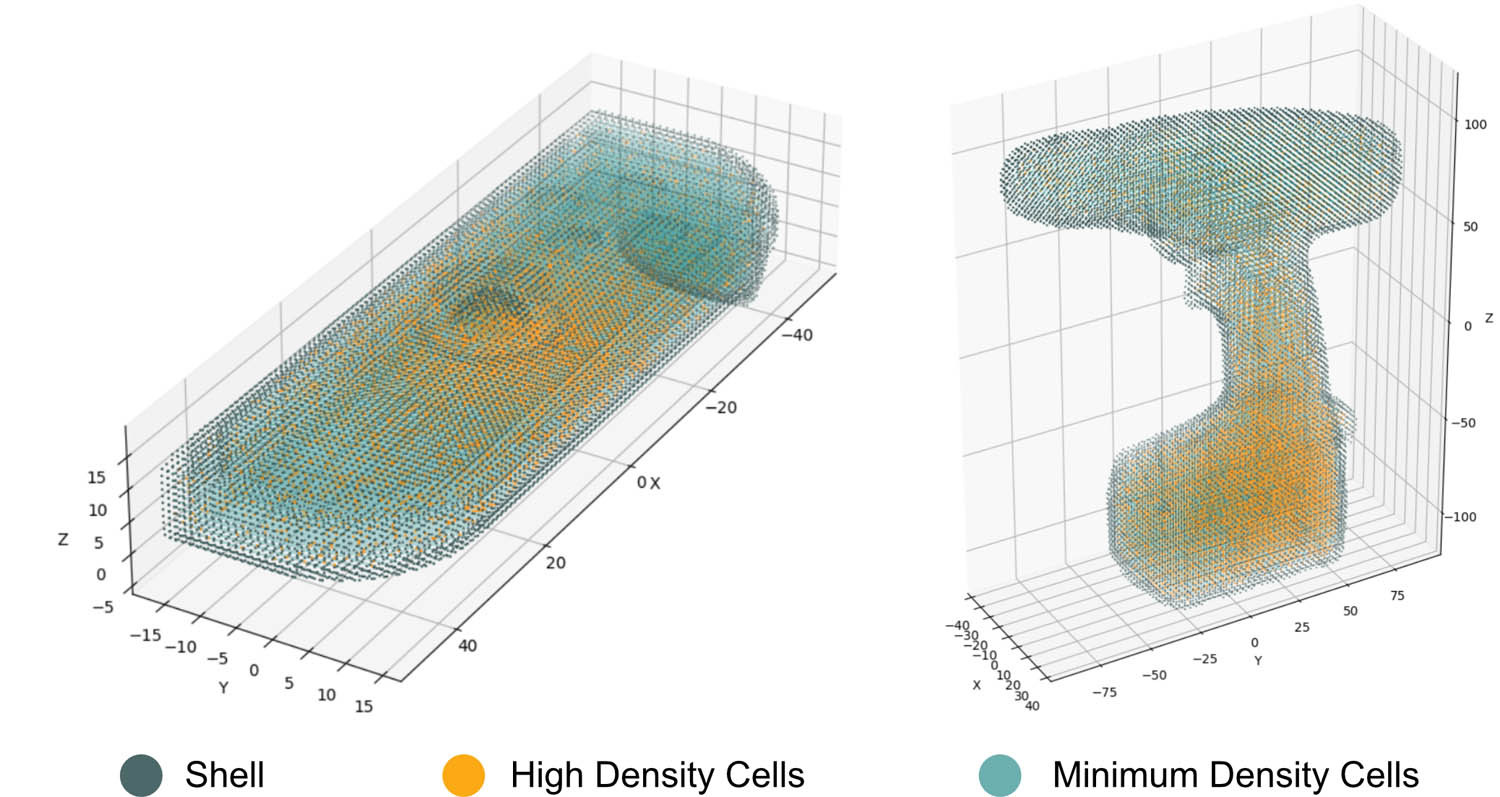

2. Emulating density in 3D-printed models

3D-printed prototypes might reflect the exact dimensions of a final product, but they typically have significantly different mass properties. This is especially true in early-stage form models, which often look to save time and material costs.

While this isn’t always important, real-world product weighting can seriously impact a design and change the feel of a product.

This project arrived at a method through which mass properties can be emulated in 3D printing. Written in Python, the code produces a cell-wise breakdown that highlights where mass needs to be placed to best emulate the desired mass properties.
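The lab's code is not reproduced here, but the underlying balance can be sketched in a few lines. The example below assumes the printed shell's mass and centre of mass are known, works out how much extra mass is needed and where its centre must sit, then ranks candidate cells by how close they are to that point. All names and numbers are hypothetical, and the nearest-cell ranking is a simple heuristic rather than the project's actual method.

```python
from math import dist


def ballast_requirements(shell_mass, shell_com, target_mass, target_com):
    """Return (mass, centre) of the extra mass needed to reach the target.

    Follows from the mass balance:
    target_mass * target_com = shell_mass * shell_com + extra * centre
    """
    extra = target_mass - shell_mass
    if extra <= 0:
        raise ValueError("printed shell is already at or above the target mass")
    centre = tuple(
        (target_mass * t - shell_mass * s) / extra
        for t, s in zip(target_com, shell_com)
    )
    return extra, centre


def rank_cells(cell_centres, ballast_centre):
    """Order cell indices from nearest to farthest from the ballast centre."""
    return sorted(
        range(len(cell_centres)),
        key=lambda i: dist(cell_centres[i], ballast_centre),
    )


# Hypothetical values: masses in grams, positions in millimetres.
shell_mass, shell_com = 120.0, (0.0, 0.0, 10.0)
target_mass, target_com = 300.0, (5.0, 0.0, 12.0)
cells = [(0.0, 0.0, 10.0), (10.0, 0.0, 10.0), (10.0, 0.0, 15.0), (0.0, 0.0, 15.0)]

extra, centre = ballast_requirements(shell_mass, shell_com, target_mass, target_com)
order = rank_cells(cells, centre)
print(extra, centre, order)
```

Filling the highest-ranked cells first gives a rough starting layout; a real solver would also need to respect how much mass each cell can hold.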

Initial results have been promising, with several case studies considered, including a handheld games console and an electric drill. In each of these cases, the mass error proved less than 1 gram, and the centre of mass position error was less than 1 millimetre.
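To make those two figures concrete, here is a minimal sketch of how mass error and centre-of-mass error could be computed for a prototype described as a list of point masses. The function names and sample values are hypothetical and are not the case-study data.

```python
from math import dist


def mass_properties(cells):
    """cells: list of (mass_g, (x, y, z) in mm).
    Returns the total mass and the centre of mass."""
    total = sum(m for m, _ in cells)
    com = tuple(sum(m * p[i] for m, p in cells) / total for i in range(3))
    return total, com


def emulation_errors(cells, target_mass, target_com):
    """Return (mass error in g, centre-of-mass position error in mm)."""
    total, com = mass_properties(cells)
    return abs(total - target_mass), dist(com, target_com)


# Hypothetical prototype: a printed shell plus one block of added mass.
prototype = [
    (120.0, (0.0, 0.0, 10.0)),   # printed shell
    (179.5, (8.3, 0.0, 13.3)),   # added ballast
]
mass_err, com_err = emulation_errors(prototype, 300.0, (5.0, 0.0, 12.0))

# Check against the thresholds quoted in the article.
print(mass_err < 1.0, com_err < 1.0)
```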

3. Digital prototyping mirror

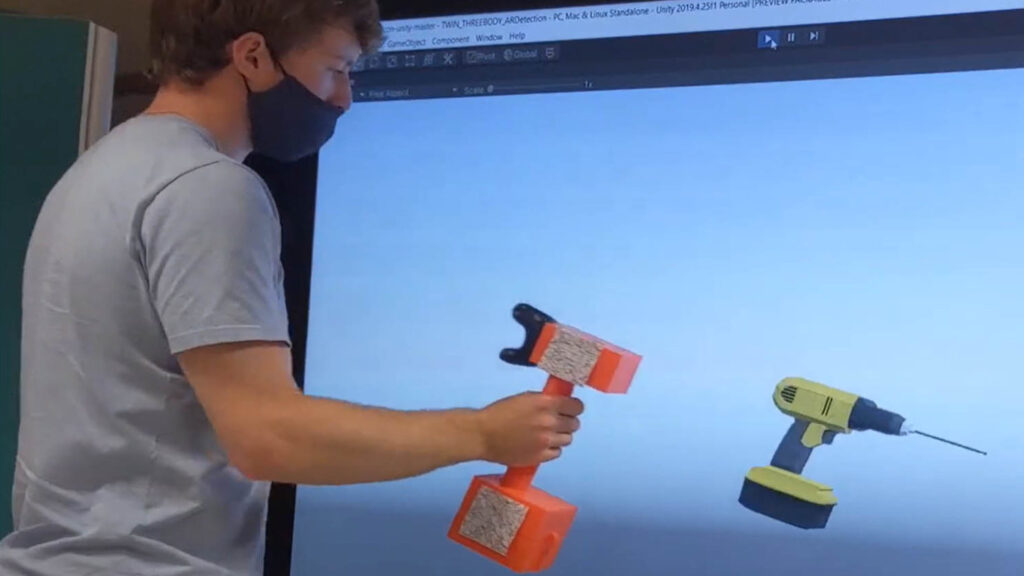

Using virtual and augmented reality tracking technologies, users can manipulate a physical prototype directly and see their actions replicated on the digital version, giving them a way to interact with an as-final prototype, right from the earliest concept stages of a design process.

The project initially uses a technique known as a digital mirror, in which the digital model is synchronised on a screen as if it were the reflection of the physical prototype held in front of it. Users can move the physical object while seeing themselves on the screen holding and using the as-final version. They can also reconfigure the physical version with new geometry, handles or parts, and see the changes replicated in as-final form in the reflection. It’s easy to imagine this project advancing into full AR, combined with emulated model density so that users can feel the difference in the object in their hand.
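The geometry behind a mirror view can be sketched briefly. The example below assumes the tracking system reports points on the prototype in the same coordinate frame as the screen, and reflects each point across the screen plane. It is an illustration of the standard reflection formula, not the lab's implementation, and every name in it is hypothetical.

```python
def reflect_point(point, plane_point, plane_normal):
    """Reflect a 3D point across a plane given by a point on the plane
    and a unit-length normal: p' = p - 2 * ((p - p0) . n) * n"""
    offset = sum((p - q) * n for p, q, n in zip(point, plane_point, plane_normal))
    return tuple(p - 2.0 * offset * n for p, n in zip(point, plane_normal))


# Hypothetical set-up: the screen lies in the plane z = 0, facing +z.
screen_point = (0.0, 0.0, 0.0)
screen_normal = (0.0, 0.0, 1.0)

# Hypothetical tracked corner of the prototype, 300 mm in front of the screen.
tracked = (100.0, 50.0, 300.0)
mirrored = reflect_point(tracked, screen_point, screen_normal)
print(mirrored)
```

Applying the same reflection to every tracked point on each frame would keep the on-screen model moving like a reflection of the object in the user's hand.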